Master your data from source to scale.

We collect, clean, structure, and annotate data so it becomes ready for AI training, product development, and advanced analytics – turning raw information into production-ready datasets that improve accuracy, reduce manual effort, and scale with your business.

Full-lifecycle data management shortens the path from raw information to high-performing AI models. Pairing expert domain knowledge with rigorous validation turns unstructured data into structured, validated training data. Datasets move into production with fewer surprises, which raises model accuracy and cuts the time spent troubleshooting data.

Poorly labeled or inconsistent datasets create bottlenecks, lower accuracy, and compound the cost of fixing errors downstream. Here's how the quality bar actually moves the numbers.

Illustrative figures across customer engagements. Actual improvements vary by use case and baseline quality.

Accurate data leads to better decisions and more reliable outcomes.

Clear guidelines, measured inter-annotator agreement, and calibration reviews – consistency that scales.

Every file passes through 2–3 QA layers before it reaches your team.
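As a rough illustration of what "measured inter-annotator agreement" can mean in practice, the sketch below computes Cohen's kappa for two annotators who labeled the same items. Kappa is one common agreement statistic; the choice of metric, the function name `cohen_kappa`, and the sample labels are assumptions made for this example, not a description of the reporting used on any particular engagement.

```python
from collections import Counter


def cohen_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labeling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two equal-length, non-empty label lists")
    n = len(labels_a)
    # Observed agreement: fraction of items where both annotators match.
    p_o = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement expected from each annotator's label frequencies.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    p_e = sum(freq_a[k] * freq_b[k] for k in freq_a) / (n * n)
    if p_e == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (p_o - p_e) / (1 - p_e)


# Hypothetical labels from two annotators on the same four images.
annotator_1 = ["cat", "cat", "dog", "dog"]
annotator_2 = ["cat", "dog", "dog", "dog"]
print(cohen_kappa(annotator_1, annotator_2))
```

A kappa of 1 means perfect agreement and 0 means agreement no better than chance, so the statistic is stricter than a raw match rate when one label dominates the batch.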

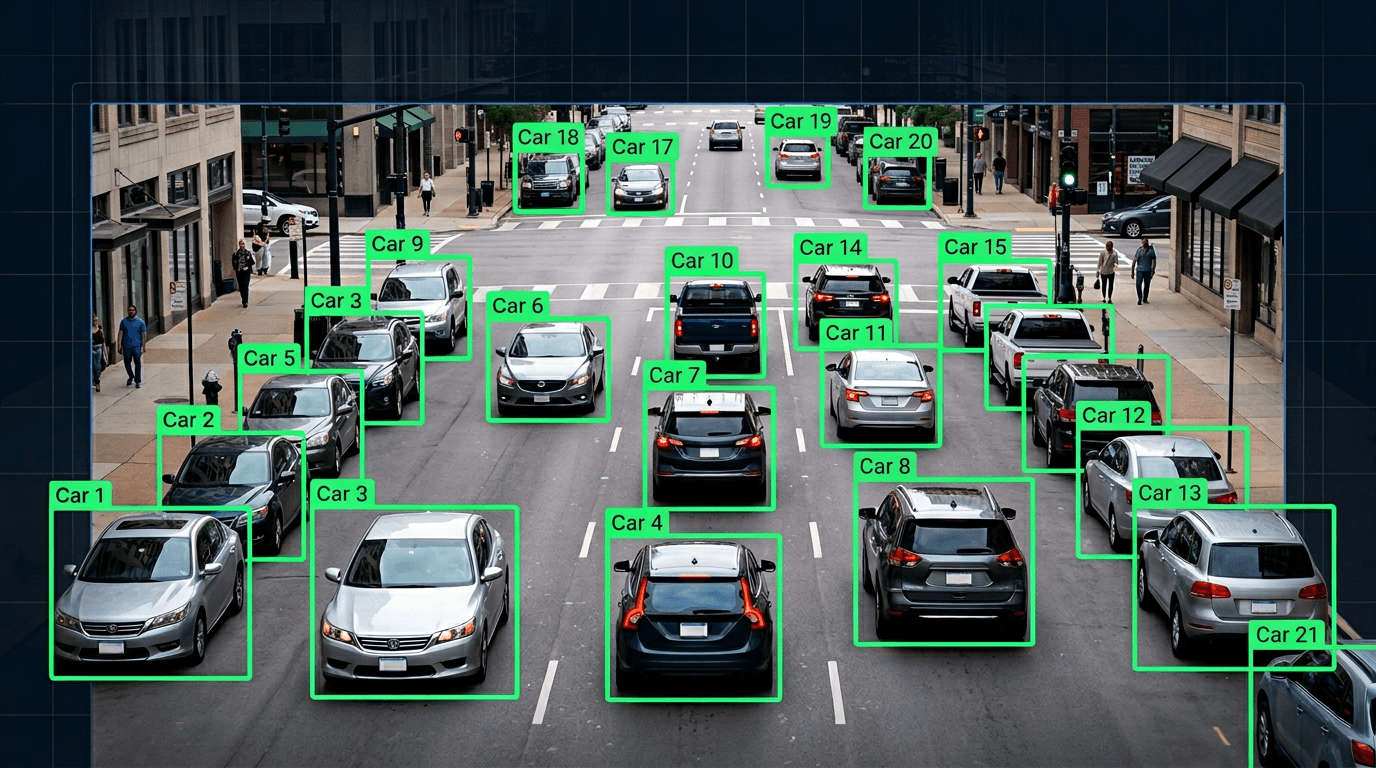

Pick a modality to preview how we structure the output. Every format ships with full guideline docs, inter-annotator agreement reports, and QA-approved ground truth.

Pixel-precise labeling across classification, detection, and segmentation tasks – the foundation for any computer-vision model.

Every engagement runs through the same discipline – so there's no ambiguity about where we are, what's coming, or how quality is measured.

Learn your goals, project scope, data types, and quality expectations.

Collect, clean, organize, and prepare the source data for annotation.

Define labeling rules, edge cases, and quality standards.

Train annotators on the guidelines, workflow, and output quality standards.

Annotate data to the approved guidelines and project specs.

Run 2–3 review layers to catch errors and maintain accuracy.

Deliver validated output in AI-ready formats.

Security, privacy, and governance are built into every engagement – from NDA-first onboarding through encrypted delivery and verified deletion.

Data encrypted in transit (TLS 1.3) and at rest (AES-256) across every storage and processing surface – source files never travel unprotected.

Mutual NDAs in place from day one, with annotators bound to per-project confidentiality and clean-desk policies before any data touches the floor.

Workflows aligned with GDPR, Vietnam's PDPL, and Australia's Privacy Act – PII handled under documented retention, residency, and disclosure rules.

Least-privilege access, MFA on every account, and project-scoped permissions – annotators only see the data their assigned task requires.

Every change tracked – who touched what, when, and why. Versioned guidelines and per-batch agreement scores ship with every delivery.

Data purged on contractually agreed timelines with cryptographic erasure on completion – nothing lingers beyond what your engagement requires.
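To make the least-privilege, project-scoped access described above more concrete, here is a minimal sketch of a deny-by-default check. The `Annotator` class, the `can_view` function, and the ID formats are hypothetical names invented for this illustration; a real deployment would typically enforce this in an identity provider or access-control service.

```python
from dataclasses import dataclass, field


@dataclass
class Annotator:
    user_id: str
    mfa_verified: bool
    # Assigned task IDs, keyed by project ID (hypothetical structure).
    assignments: dict = field(default_factory=dict)


def can_view(annotator, project_id, task_id):
    """Deny by default; allow only MFA-verified users on an assigned task."""
    if not annotator.mfa_verified:
        return False
    return task_id in annotator.assignments.get(project_id, set())


# Example: an annotator assigned to a single task in a single project.
ann = Annotator("a1", True, {"proj-1": {"task-7"}})
print(can_view(ann, "proj-1", "task-7"))
print(can_view(ann, "proj-1", "task-8"))
```

The check returns `False` unless every condition is met, so a missing assignment or an unverified account never falls through to access.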

The discipline, the flexibility, and the numbers – here's what teams rely on us for, again and again.

Structured project management, versioned guidelines, and audit trails – ready for regulated industries.

From one-off labeled batches to ongoing annotation ops – scale up or down without contract drama.

Image, video, text, audio, document, and 3D – one team, one point of contact, every modality covered.

2–3 layers of review with measured inter-annotator agreement – every dataset ships with a quality report.

From 10K rows to 10M – consistent throughput with elastic annotation teams and batched QA.

Trusted by 60+ teams worldwide – we speak the language of both data engineers and ML researchers.

Four engagements across annotation, analytics, and data-product work – each with a measurable outcome behind the dataset.